AI and the Human Responsibility That Comes With It

Artificial Intelligence is no longer a futuristic concept. It is the quiet engine powering much of modern life. It writes emails, summarizes meetings, suggests purchases, and drafts reports. The experience is seamless. It is efficient. At times, it feels almost like magic.

A fundamental truth sits beneath that convenience: AI does not remove responsibility. It redistributes it.

At Jay Secures™, technology is viewed through a human-centered security lens. The mission is simple: help leaders, teams, and individuals use powerful tools wisely while staying alert to the risks that are easy to overlook. AI can amplify capability, but human behavior still determines whether that power strengthens protection or quietly opens a door to risk.

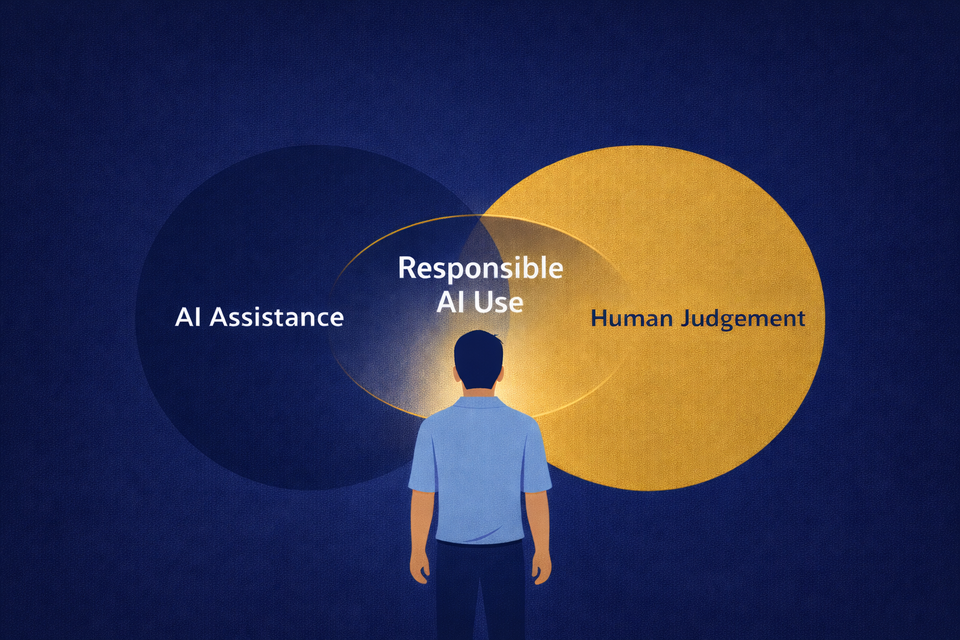

AI Is Assistance. Judgment Is Ownership.

AI can process data at speeds no human can match. It can generate polished, authoritative output in seconds. The technology is impressive, yet it remains incomplete in three important ways:

- Values: AI cannot distinguish between what is efficient and what is ethical.

- Context: AI cannot understand the nuances of your organization, your relationships, or the situation surrounding a decision.

- Accountability: An algorithm cannot stand behind a mistake.

Authority in tone does not guarantee accuracy in substance. Speed in response does not equal depth of understanding. Whether someone is a CEO, a student, or an intern, the final decision always belongs to a person. Every prompt entered, every output accepted, and every action taken reflects human ownership.

The Real Source of AI Risk

Many people assume AI risk begins with hackers or malicious misuse. In reality, most exposure begins with everyday behavior. It often takes the form of what security professionals call Shadow AI: individuals using unsanctioned tools, usually just to work faster.

Risk grows quietly when convenience begins to replace judgment.

Sensitive company material might be pasted into a public AI system to troubleshoot a problem. A student might submit AI-generated homework without verifying the facts. A parent or child might share personal information with a chatbot without realizing the conversation could be stored or reused. AI-generated summaries might be shared without checking the original sources. Content that sounds convincing might be published without confirming its accuracy.

None of these actions feel dramatic in the moment. They often feel efficient or helpful. The risk only becomes visible afterward. AI makes it easier to move quickly, and speed without review can expose information, spread errors, and damage trust.

A Simple Framework for Responsible AI Use

Responsible AI use does not require advanced technical knowledge. It begins with a few intentional habits. Before sharing or acting on AI-generated output, pause and run a simple mental check:

- Verify: Is the information accurate? Confirm key facts using trusted sources.

- Voice: Does the output reflect your thinking, your judgment, and your organization’s values? AI can assist expression but should not replace your perspective.

- Visibility: Where is the information going? Ensure the data being shared is appropriate for the system receiving it.

These three questions help bridge the gap between technical security controls and everyday decision-making.

The Hidden Threat: Cognitive Atrophy

Another risk receives far less attention. Heavy reliance on AI can gradually weaken active thinking. When every message is drafted by an algorithm and every article summarized automatically, the mental effort required to analyze and interpret information begins to fade.

Over time, assistance becomes habit. Habit can quietly turn into dependency.

Intellectual resilience remains an essential part of security. A useful test is simple: if you cannot explain the reasoning behind an AI-generated answer in your own words, the answer needs deeper review before you rely on it. AI should expand capability, not replace comprehension.

AI Reflects Your Culture

Technology functions like a mirror. It amplifies the habits that already exist.

- Individuals who prioritize speed over accuracy will find themselves making faster mistakes.

- Teams with unclear boundaries around data will see those gaps exposed.

- Organizations without defined accountability will struggle to determine who owns the outcome of automated decisions.

AI does not create culture. It reveals it. Responsible adoption begins with clarity. People need to understand what information must remain private, when human review is required, and who ultimately owns the final decision when AI is involved.

When those expectations are clear, AI becomes a powerful tool for productivity and innovation. When they are not, the same technology quietly magnifies confusion, risk, and misplaced trust.

The Future Is Behavioral

Artificial Intelligence will continue to evolve. Tools will become more capable and more autonomous. Technical progress is certain. The more important question is whether human habits evolve alongside it.

The long-term impact of AI will not be determined by algorithms alone. It will be shaped by the behavior of the people using them. When AI is used thoughtfully, it becomes leverage. When it is used passively, it amplifies existing weaknesses.

At the end of the day, responsibility remains human. Always.